How AI Is Accelerating the Discovery and Synthesis of Graphene Materials

The convergence of Artificial Intelligence (AI) and graphene research is fundamentally changing how scientists approach the design and production of carbon-based nanomaterials. By leveraging massive datasets and predictive modeling, researchers are moving away from the slow, trial-and-error methods that have historically hindered the commercial scaling of 2D materials.

While the potential of graphene has been understood for decades, the transition from lab-scale experimentation to reliable industrial manufacturing remains a complex challenge. Today, AI models are being deployed to predict how specific production parameters affect structural quality, enabling a faster route to high-performance, application-ready graphene. These AI-driven workflows are advancing rapidly, but their predictions still have to be validated experimentally in physical labs.

Key Takeaways

- Accelerated Discovery: Once trained, AI models can computationally screen thousands of candidate material variations, often in seconds, to shortlist the most promising ones for a specific application.

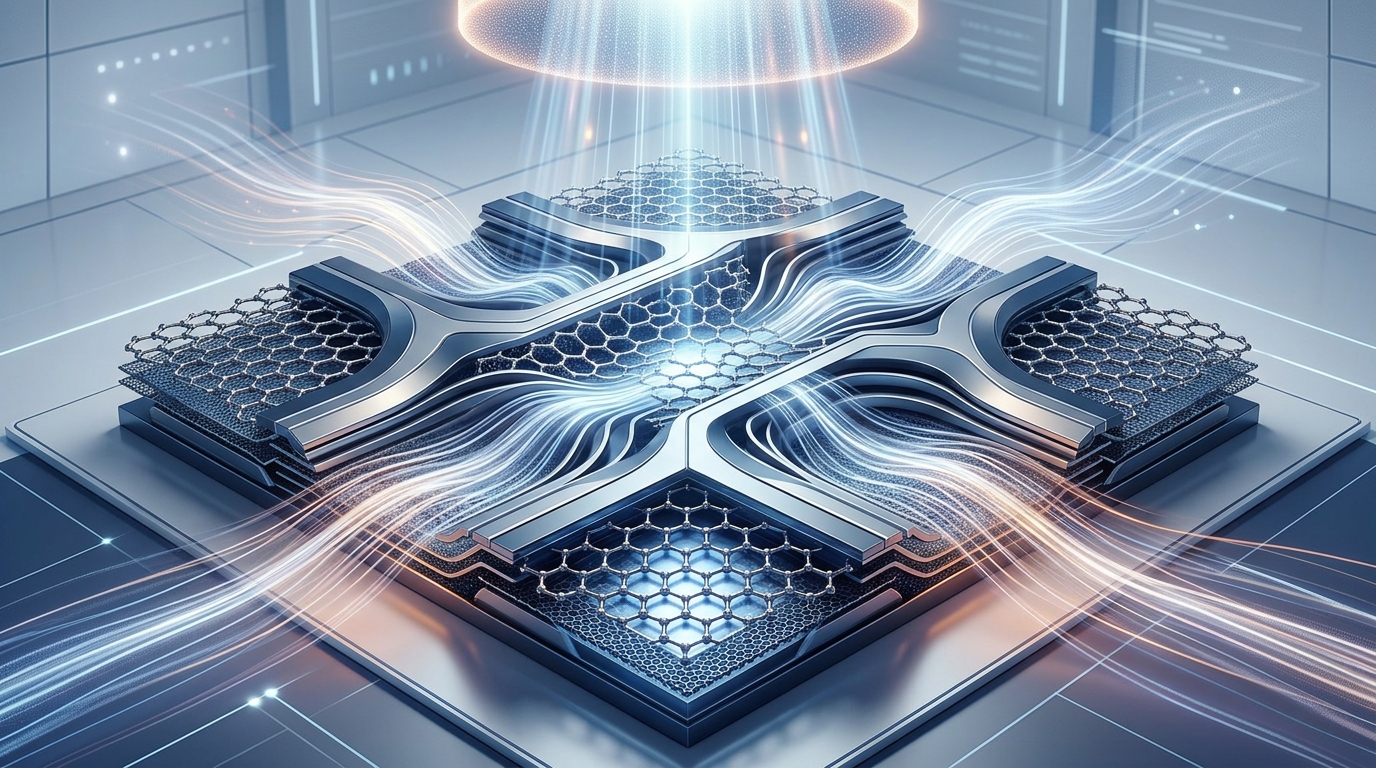

- Process Optimization: Machine learning is being used to fine-tune synthesis techniques like Chemical Vapor Deposition (CVD) to reduce defects.

- Data-Driven Scaling: AI helps bridge the gap between small-batch research and industrial-grade production by predicting whether material quality stays consistent as batch sizes grow.

- Cost Reduction: By optimizing energy usage and material inputs, AI-assisted synthesis aims to lower the long-term cost of high-quality graphene.

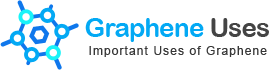

How AI Enhances Graphene Synthesis

Traditional graphene synthesis often involves complex, multi-step processes where slight changes in temperature, pressure, or precursor gas composition can lead to significant variations in the final material quality. AI models, particularly those using machine learning (ML), ingest historical data from thousands of previous synthesis attempts and learn which combinations of growth conditions tend to produce the best material.
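As a rough illustration of the idea, the sketch below fits a very simple surrogate model to a handful of past runs and uses it to pick a recipe from a candidate grid. Everything here is an assumption made for the example: the run data is invented, the Raman D/G ratio is used as a stand-in quality metric, and a nearest-neighbor average replaces whatever model a real lab would train. The names `RUNS`, `predict_dg`, and `best_candidate` are hypothetical.

```python
import math

# Hypothetical historical CVD runs (all numbers invented for illustration).
# Inputs: (temperature in deg C, pressure in Torr, methane flow in sccm)
# Output: Raman D/G intensity ratio, where lower usually means fewer defects.
RUNS = [
    ((950.0, 0.5, 10.0), 0.42),
    ((1000.0, 0.5, 10.0), 0.21),
    ((1035.0, 0.5, 5.0), 0.08),
    ((1035.0, 1.0, 10.0), 0.15),
    ((1050.0, 0.5, 5.0), 0.11),
    ((1000.0, 1.0, 20.0), 0.33),
]


def column_bounds(runs):
    """Return per-parameter (minimums, maximums) over the recorded runs."""
    columns = list(zip(*(params for params, _ in runs)))
    return tuple(min(c) for c in columns), tuple(max(c) for c in columns)


def rescale(params, lows, highs):
    """Map each parameter onto the 0..1 span of the data so units do not skew distances."""
    return tuple((p - lo) / (hi - lo) for p, lo, hi in zip(params, lows, highs))


def predict_dg(params, runs=RUNS, k=3):
    """Estimate D/G as an inverse-distance-weighted mean of the k nearest past runs."""
    lows, highs = column_bounds(runs)
    query = rescale(params, lows, highs)
    nearest = sorted(
        (math.dist(query, rescale(p, lows, highs)), dg) for p, dg in runs
    )[:k]
    weights = [1.0 / (distance + 1e-9) for distance, _ in nearest]
    return sum(w * dg for w, (_, dg) in zip(weights, nearest)) / sum(weights)


def best_candidate(candidates, runs=RUNS, k=3):
    """Pick the candidate recipe with the lowest predicted D/G ratio."""
    return min(candidates, key=lambda c: predict_dg(c, runs, k))


if __name__ == "__main__":
    # Small candidate grid at fixed pressure; a real search space would be larger.
    grid = [(t, 0.5, f) for t in (980.0, 1010.0, 1040.0) for f in (5.0, 10.0)]
    recipe = best_candidate(grid)
    print(recipe, round(predict_dg(recipe), 3))
```

A nearest-neighbor average is used here only because it fits in a few lines; it can never predict a value outside the range already observed, which is one reason the recommended recipe still needs to be confirmed in the lab.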

By continuously monitoring live production data, AI systems can make real-time adjustments that steer the crystalline structure of the graphene toward the property the application needs, such as conductivity or mechanical strength. This predictive capability is essential for scaling production while maintaining strict quality control standards.
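The adjustment step in such a loop can be sketched as follows. This is a minimal example under stated assumptions, not a description of any particular production system: a plain proportional correction stands in for the model's recommendation, the readings are made up, and `Limits` and `next_setpoint` are hypothetical names. The part worth noticing is the clamping, which keeps any automated change small and inside safe bounds.

```python
from dataclasses import dataclass


@dataclass
class Limits:
    low: float       # lowest setpoint the equipment may be given
    high: float      # highest setpoint the equipment may be given
    max_step: float  # largest change allowed in one control cycle


def clamp(value, low, high):
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def next_setpoint(current, measured, target, gain, limits):
    """Nudge the setpoint toward the target, limited per cycle and by safe bounds."""
    step = gain * (target - measured)                      # proportional correction
    step = clamp(step, -limits.max_step, limits.max_step)  # never jump too far at once
    return clamp(current + step, limits.low, limits.high)  # stay inside safe bounds


if __name__ == "__main__":
    limits = Limits(low=900.0, high=1060.0, max_step=5.0)
    setpoint = 1030.0
    for measured in (1020.0, 1027.0, 1033.0, 1035.0):  # made-up sensor readings, deg C
        setpoint = next_setpoint(setpoint, measured, target=1035.0, gain=0.5, limits=limits)
        print(f"measured={measured:.1f} -> setpoint={setpoint:.1f}")
```

In practice the proportional term would likely be replaced by a learned model's suggested correction, but the same guardrails would still apply.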

Predicting Properties and Performance

Beyond manufacturing, AI is revolutionizing the computational discovery of new graphene derivatives and composites. Scientists are using deep learning architectures to simulate how graphene interacts with other molecules at the atomic scale.

This virtual testing allows researchers to estimate a material's performance before it is manufactured. Whether the goal is to enhance battery electrodes, create more sensitive biosensors, or develop stronger structural materials, AI provides a framework for narrowing a large set of chemical configurations down to the most promising few, which can save months of laboratory time.
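The narrowing-down step can be pictured as ranking candidates by a predicted score and keeping only the top few for lab work. The sketch below assumes the predictions already exist; the candidate names, the normalized scores, and the 0.7/0.3 weighting are all invented for illustration, and `shortlist` and `electrode_score` are hypothetical helpers.

```python
import heapq

# Hypothetical candidates with model-predicted properties, normalized to 0..1.
# All values are invented; a real workflow would take them from a trained model.
CANDIDATES = [
    {"name": "N-doped", "conductivity": 0.80, "stability": 0.60},
    {"name": "B-doped", "conductivity": 0.65, "stability": 0.90},
    {"name": "GO-reduced", "conductivity": 0.50, "stability": 0.70},
    {"name": "F-functionalized", "conductivity": 0.20, "stability": 0.95},
]


def electrode_score(candidate):
    """Toy figure of merit for a battery electrode; the weights are an assumption."""
    return 0.7 * candidate["conductivity"] + 0.3 * candidate["stability"]


def shortlist(candidates, score, n):
    """Return the n highest-scoring candidates, best first."""
    return heapq.nlargest(n, candidates, key=score)


if __name__ == "__main__":
    for candidate in shortlist(CANDIDATES, electrode_score, n=2):
        print(candidate["name"], round(electrode_score(candidate), 3))
```

Changing the scoring function is how the same candidate pool would be re-ranked for a different goal, such as a biosensor rather than an electrode.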

Industry Impact and Future Outlook

As the graphene industry matures, the integration of AI-assisted materials informatics is becoming a competitive advantage. Companies that adopt these computational tools are better positioned to meet the demand for high-purity, application-specific graphene. We expect to see more partnerships between software developers and materials science firms in the coming years, focusing on automated labs that integrate AI with robotics for “self-driving” experiments.

Frequently Asked Questions

Can AI synthesize graphene physically?

AI does not physically create the material; rather, it provides the precise instructions, parameter sets, and optimization strategies that robotic systems or human researchers use to synthesize high-quality graphene more efficiently.

Does AI replace human researchers?

No. AI serves as an advanced tool that handles large-scale data analysis and pattern recognition, allowing human scientists to focus on experimental design, ethical considerations, and innovative conceptual breakthroughs.

How reliable are AI predictions for new materials?

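AI models tend to be most accurate when interpolating, that is, when predicting within the range of conditions covered by their training data. They are less reliable when extrapolating beyond that range, so predictions must always be verified by experimental testing. As training datasets grow, the reliability of these predictions generally improves.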
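One simple way to act on this is to flag any prediction whose inputs fall outside the ranges seen during training. The check below is a deliberately crude sketch: it tests each parameter independently (a bounding box), which can miss gaps inside the covered region, and the training points and the name `in_training_range` are made up for the example.

```python
def in_training_range(query, training_points):
    """True if every query parameter lies within the min..max seen in training.

    This is a per-parameter bounding-box test, so it is only a first filter:
    a point can pass it and still be far from any actual training example.
    """
    columns = list(zip(*training_points))
    return all(min(col) <= q <= max(col) for q, col in zip(query, columns))


if __name__ == "__main__":
    # Hypothetical training inputs: (temperature in deg C, pressure in Torr).
    seen = [(950.0, 0.5), (1000.0, 1.0), (1050.0, 0.5)]
    for query in [(1020.0, 0.8), (1100.0, 0.8)]:
        label = "interpolation" if in_training_range(query, seen) else "extrapolation"
        print(query, label)
```

Predictions flagged as extrapolation are the ones that most need experimental confirmation before anyone relies on them.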

Editorial Disclaimer

This article is provided for educational and informational purposes only. Details can change over time, so readers should verify important information with official sources, qualified professionals, manufacturers, publishers, or relevant authorities before making decisions.